CIFAR100 image classification (tf.keras: CNN)

This dataset has 50,000 color images for training and 10,000 color images for testing. It has 100 classes grouped into 20 superclasses, labeled as follows. There are many MNIST and CIFAR10 examples on the internet, but CIFAR100 examples are much rarer, so I decided to try it.

| Superclass | Classes |

| aquatic mammals | beaver(4), dolphin(30), otter(55), seal(72), whale(95) |

| fish | aquarium fish(1), flatfish(32), ray(67), shark(73), trout(91) |

| flowers | orchids(54), poppies(62), roses(70), sunflowers(82), tulips(92) |

| food containers | bottles(9), bowls(10), cans(16), cups(28), plates(61) |

| fruit and vegetables | apples(0), mushrooms(51), oranges(53), pears(57), sweet peppers(83) |

| household electrical devices | clock(22), computer keyboard(39), lamp(40), telephone(86), television(97) |

| household furniture | bed(5), chair(20), couch(25), table(84), wardrobe(94) |

| insects | bee(6), beetle(7), butterfly(14), caterpillar(18), cockroach(24) |

| large carnivores | bear(3), leopard(42), lion(43), tiger(88), wolf(97) |

| large man-made outdoor things | bridge(12), castle(17), house(37), road(68), skyscraper(76) |

| large natural outdoor scenes | cloud(23), forest(33), mountain(49), plain(60), sea(71) |

| large omnivores and herbivores | camel(15), cattle(19), chimpanzee(21), elephant(31), kangaroo(38) |

| medium-sized mammals | fox(34), porcupine(63), possum(64), raccoon(66), skunk(75) |

| non-insect invertebrates | crab(26), lobster(45), snail(77), spider(79), worm(99) |

| people | baby(2), boy(11), girl(35), man(46), woman(98) |

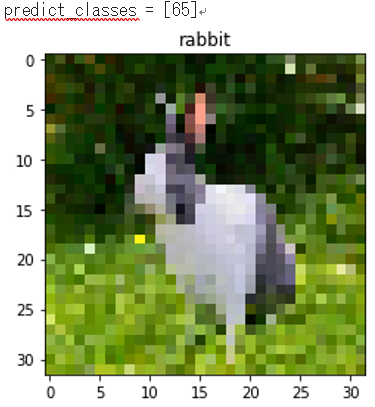

| reptiles | crocodile(27), dinosaur(29), lizard(44), snake(78), turtle(93) |

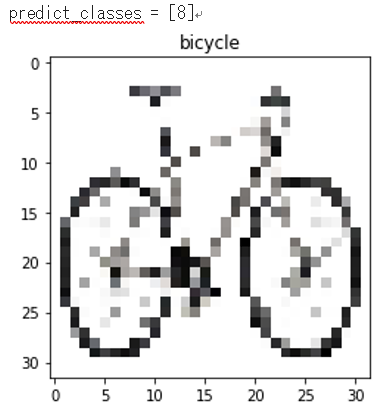

| small mammals | hamster(36), mouse(50), rabbit(65), shrew(74), squirrel(80) |

| trees | maple(47), oak(52), palm(56), pine(59), willow(96) |

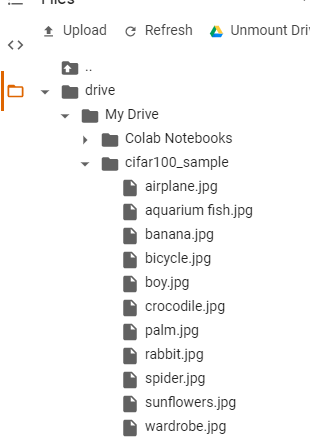

| vehicles 1 | bicycle(8), bus(13), motorcycle(48), pickup truck(58), train(90) |

| vehicles 2 | lawn-mower(41), rocket(69), streetcar(81), tank(85), tractor(89) |

from keras.datasets import cifar100

import matplotlib.pyplot as plt

import numpy as np

# Download dataset of CIFAR-100 (Canadian Institute for Advanced Research)

(x_train,y_train),(x_test,y_test) = cifar100.load_data()

# Check the shape of the arrays

print('x_train shape:', x_train.shape)

print('x_test shape:', x_test.shape)

print('y_train shape:', y_train.shape)

print('y_test shape:', y_test.shape)

# Number of samples in each data set

print(x_train.shape[0], 'train samples')

print(x_test.shape[0], 'test samples')

# Check the data types

print(type(x_test))

print(type(y_test[0]))

x_train shape: (50000, 32, 32, 3)
x_test shape: (10000, 32, 32, 3)
y_train shape: (50000, 100)
y_test shape: (10000, 100)
50000 train samples
10000 test samples
<class 'numpy.ndarray'>
<class 'numpy.ndarray'>

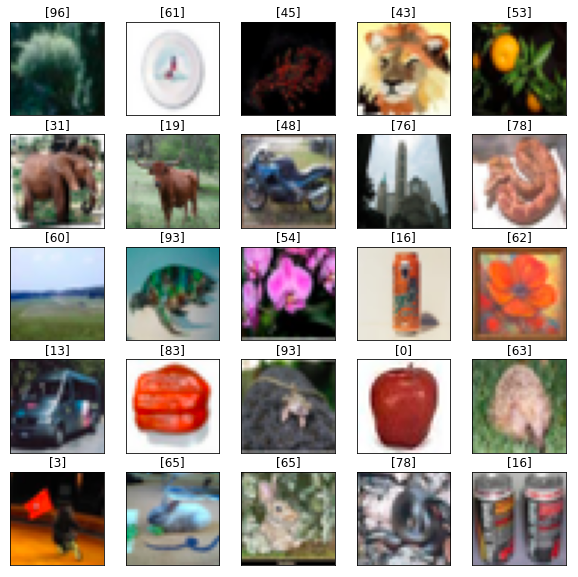
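A note on the label shapes: `cifar100.load_data()` returns integer labels with shape `(N, 1)`, so a shape of `(N, 100)` like the one printed above is what the labels look like once they are one-hot encoded. The following is a minimal NumPy sketch of that conversion. `one_hot` is a hypothetical helper written for illustration, not the Keras implementation, and the three labels are made up.

```python
import numpy as np

def one_hot(labels, num_classes=100):
    """Convert integer labels of shape (N, 1) or (N,) to one-hot rows."""
    labels = np.asarray(labels).reshape(-1)  # flatten (N, 1) -> (N,)
    # Row i of the identity matrix is the one-hot vector for class i
    return np.eye(num_classes, dtype="float32")[labels]

# Three made-up labels in the same (N, 1) layout that load_data() returns
y = np.array([[19], [29], [0]])
y_onehot = one_hot(y)

print(y.shape, "->", y_onehot.shape)
# argmax along the class axis recovers the original integer labels
print(np.argmax(y_onehot, axis=1))
```

Taking `np.argmax` along the class axis is the usual way to get back from one-hot rows to class numbers, which is handy when plotting labels.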

Next, I'll show 25 randomly selected training images.

# Show 25 random sample images in a 5x5 grid

plt.figure(figsize=(10,10))

for i in range(25):

    rand_num=np.random.randint(0,50000)

    cifar_img=plt.subplot(5,5,i+1)

    plt.imshow(x_train[rand_num])

    # Hide the tick labels

    plt.xticks(color="None")

    plt.yticks(color="None")

    # Hide the tick marks on the x-axis and y-axis

    plt.tick_params(length=0)

    # Show the correct label

    plt.title(y_train[rand_num])

plt.show()

If possible, I would like to show the class name next to the number, but that takes a bit more work.
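One way to show the class name is a lookup list indexed by the label number. The sketch below assumes the standard CIFAR-100 fine-label order, which is alphabetical and matches the numbers in the table above. `CIFAR100_LABELS` and `label_name` are names made up for this example.

```python
# CIFAR-100 fine labels in index order (alphabetical), written out by hand
CIFAR100_LABELS = [
    "apple", "aquarium_fish", "baby", "bear", "beaver", "bed", "bee", "beetle", "bicycle", "bottle",
    "bowl", "boy", "bridge", "bus", "butterfly", "camel", "can", "castle", "caterpillar", "cattle",
    "chair", "chimpanzee", "clock", "cloud", "cockroach", "couch", "crab", "crocodile", "cup", "dinosaur",
    "dolphin", "elephant", "flatfish", "forest", "fox", "girl", "hamster", "house", "kangaroo", "keyboard",
    "lamp", "lawn_mower", "leopard", "lion", "lizard", "lobster", "man", "maple_tree", "motorcycle", "mountain",
    "mouse", "mushroom", "oak_tree", "orange", "orchid", "otter", "palm_tree", "pear", "pickup_truck", "pine_tree",
    "plain", "plate", "poppy", "porcupine", "possum", "rabbit", "raccoon", "ray", "road", "rocket",
    "rose", "sea", "seal", "shark", "shrew", "skunk", "skyscraper", "snail", "snake", "spider",
    "squirrel", "streetcar", "sunflower", "sweet_pepper", "table", "tank", "telephone", "television", "tiger", "tractor",
    "train", "trout", "tulip", "turtle", "wardrobe", "whale", "willow_tree", "wolf", "woman", "worm",
]

def label_name(label):
    """Return 'name(number)' for an integer label or a one-element array such as y_train[i]."""
    idx = int(label[0]) if hasattr(label, "__len__") else int(label)
    return "{0}({1})".format(CIFAR100_LABELS[idx], idx)

print(label_name(30))
print(label_name([97]))
```

In the plotting loop, the title could then be set with `plt.title(label_name(y_train[rand_num]))`, as long as `y_train` still holds the integer labels (that is, before the one-hot conversion).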

from keras.models import Sequential

from keras.layers import Conv2D, MaxPool2D, Dense, Activation, Dropout, Flatten

from keras.utils import to_categorical

import tensorflow as tf

from tensorflow.keras import datasets, layers, models, optimizers

# Normalize the pixel values to the range 0-1

x_train = x_train.astype('float32')/255.0

x_test = x_test.astype('float32')/255.0

# Convert the integer labels in y_train and y_test to one-hot vectors

y_train = to_categorical(y_train,100)

y_test = to_categorical(y_test,100)

# Create Model(CNN + Dropout)

model = Sequential()

model.add(Conv2D(32,(3,3),padding='same',input_shape=(32,32,3)))

model.add(Activation('relu'))

model.add(Conv2D(32,(3,3),padding='same'))

model.add(Activation('relu'))

model.add(MaxPool2D(pool_size=(2,2)))

model.add(Dropout(0.25))

model.add(Conv2D(64,(3,3),padding='same'))

model.add(Activation('relu'))

model.add(Conv2D(64,(3,3),padding='same'))

model.add(Activation('relu'))

model.add(MaxPool2D(pool_size=(2,2)))

model.add(Dropout(0.25))

model.add(Flatten())

model.add(Dense(512))

model.add(Activation('relu'))

model.add(Dropout(0.5))

model.add(Dense(100,activation='softmax'))

model.compile(optimizer='adam',loss='categorical_crossentropy',metrics=['accuracy'])

model.summary()

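# Training (100 epochs took roughly 7 hours on a Google Colab GPU in this run)

# `epochs` replaces the old `nb_epoch` argument used in the original run, which is what triggers the UserWarning in the log

history = model.fit(x_train,y_train,batch_size=128,epochs=100,verbose=1)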

# Save the model architecture as a JSON file

json_string = model.to_json()

open('cifar100_cnn.json',"w").write(json_string)

# Save weights as h5 file

model.save_weights('cifar100_cnn.h5')

# Evaluation

score = model.evaluate(x_test,y_test,verbose=0)

print('Test loss:',score[0])

print('Test accuracy:',score[1])

print("Test loss: " + str(score[0]) + ", test accuracy: " + str(score[1]*100) + "%")

/usr/local/lib/python3.6/dist-packages/ipykernel_launcher.py:48: UserWarning: The `nb_epoch` argument in `fit` has been renamed `epochs`.
Epoch 1/100 50000/50000 [==============================] - 256s 5ms/step - loss: 4.0701 - accuracy: 0.0756
Epoch 2/100 50000/50000 [==============================] - 255s 5ms/step - loss: 3.4099 - accuracy: 0.1840
Epoch 3/100 50000/50000 [==============================] - 254s 5ms/step - loss: 3.0595 - accuracy: 0.2481
Epoch 4/100 50000/50000 [==============================] - 254s 5ms/step - loss: 2.8184 - accuracy: 0.2943
Epoch 5/100 50000/50000 [==============================] - 254s 5ms/step - loss: 2.6257 - accuracy: 0.3345
Epoch 6/100 50000/50000 [==============================] - 252s 5ms/step - loss: 2.4737 - accuracy: 0.3634
Epoch 7/100 50000/50000 [==============================] - 253s 5ms/step - loss: 2.3438 - accuracy: 0.3901
Epoch 8/100 50000/50000 [==============================] - 252s 5ms/step - loss: 2.2424 - accuracy: 0.4094
Epoch 9/100 50000/50000 [==============================] - 252s 5ms/step - loss: 2.1485 - accuracy: 0.4309
Epoch 10/100 50000/50000 [==============================] - 252s 5ms/step - loss: 2.0630 - accuracy: 0.4453
Epoch 11/100 50000/50000 [==============================] - 253s 5ms/step - loss: 1.9986 - accuracy: 0.4614
Epoch 12/100 50000/50000 [==============================] - 254s 5ms/step - loss: 1.9237 - accuracy: 0.4774
Epoch 13/100 50000/50000 [==============================] - 258s 5ms/step - loss: 1.8693 - accuracy: 0.4896
Epoch 14/100 50000/50000 [==============================] - 252s 5ms/step - loss: 1.8001 - accuracy: 0.5015
Epoch 15/100 50000/50000 [==============================] - 257s 5ms/step - loss: 1.7608 - accuracy: 0.5115
Epoch 16/100 50000/50000 [==============================] - 251s 5ms/step - loss: 1.7068 - accuracy: 0.5252
Epoch 17/100 50000/50000 [==============================] - 250s 5ms/step - loss: 1.6556 - accuracy: 0.5380
Epoch 18/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.6142 - accuracy: 0.5443
Epoch 19/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.5842 - accuracy: 0.5496
Epoch 20/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.5246 - accuracy: 0.5675
Epoch 21/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.4986 - accuracy: 0.5715
Epoch 22/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.4632 - accuracy: 0.5790
Epoch 23/100 50000/50000 [==============================] - 248s 5ms/step - loss: 1.4209 - accuracy: 0.5890
Epoch 24/100 50000/50000 [==============================] - 254s 5ms/step - loss: 1.3752 - accuracy: 0.6008
Epoch 25/100 50000/50000 [==============================] - 248s 5ms/step - loss: 1.3655 - accuracy: 0.6031
Epoch 26/100 50000/50000 [==============================] - 251s 5ms/step - loss: 1.3341 - accuracy: 0.6080
Epoch 27/100 50000/50000 [==============================] - 248s 5ms/step - loss: 1.3038 - accuracy: 0.6156
Epoch 28/100 50000/50000 [==============================] - 250s 5ms/step - loss: 1.2783 - accuracy: 0.6260
Epoch 29/100 50000/50000 [==============================] - 256s 5ms/step - loss: 1.2503 - accuracy: 0.6305
Epoch 30/100 50000/50000 [==============================] - 251s 5ms/step - loss: 1.2339 - accuracy: 0.6358
Epoch 31/100 50000/50000 [==============================] - 255s 5ms/step - loss: 1.2035 - accuracy: 0.6445
Epoch 32/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.1765 - accuracy: 0.6509
Epoch 33/100 50000/50000 [==============================] - 248s 5ms/step - loss: 1.1577 - accuracy: 0.6563
Epoch 34/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.1434 - accuracy: 0.6601
Epoch 35/100 50000/50000 [==============================] - 251s 5ms/step - loss: 1.1232 - accuracy: 0.6645
Epoch 36/100 50000/50000 [==============================] - 243s 5ms/step - loss: 1.1038 - accuracy: 0.6688
Epoch 37/100 50000/50000 [==============================] - 251s 5ms/step - loss: 1.0975 - accuracy: 0.6720
Epoch 38/100 50000/50000 [==============================] - 249s 5ms/step - loss: 1.0757 - accuracy: 0.6769
Epoch 39/100 50000/50000 [==============================] - 247s 5ms/step - loss: 1.0605 - accuracy: 0.6796
Epoch 40/100 50000/50000 [==============================] - 248s 5ms/step - loss: 1.0439 - accuracy: 0.6852
Epoch 41/100 50000/50000 [==============================] - 254s 5ms/step - loss: 1.0456 - accuracy: 0.6829
Epoch 42/100 50000/50000 [==============================] - 251s 5ms/step - loss: 1.0284 - accuracy: 0.6899
Epoch 43/100 50000/50000 [==============================] - 251s 5ms/step - loss: 1.0088 - accuracy: 0.6946
Epoch 44/100 50000/50000 [==============================] - 258s 5ms/step - loss: 1.0039 - accuracy: 0.6965
Epoch 45/100 50000/50000 [==============================] - 254s 5ms/step - loss: 0.9966 - accuracy: 0.6985
Epoch 46/100 50000/50000 [==============================] - 259s 5ms/step - loss: 0.9786 - accuracy: 0.7035
Epoch 47/100 50000/50000 [==============================] - 256s 5ms/step - loss: 0.9642 - accuracy: 0.7055
Epoch 48/100 50000/50000 [==============================] - 255s 5ms/step - loss: 0.9533 - accuracy: 0.7107
Epoch 49/100 50000/50000 [==============================] - 258s 5ms/step - loss: 0.9419 - accuracy: 0.7134
Epoch 50/100 50000/50000 [==============================] - 254s 5ms/step - loss: 0.9328 - accuracy: 0.7151
Epoch 51/100 50000/50000 [==============================] - 255s 5ms/step - loss: 0.9247 - accuracy: 0.7186
Epoch 52/100 50000/50000 [==============================] - 255s 5ms/step - loss: 0.9303 - accuracy: 0.7158
Epoch 53/100 50000/50000 [==============================] - 256s 5ms/step - loss: 0.9146 - accuracy: 0.7216
Epoch 54/100 50000/50000 [==============================] - 256s 5ms/step - loss: 0.9121 - accuracy: 0.7255
Epoch 55/100 50000/50000 [==============================] - 256s 5ms/step - loss: 0.8899 - accuracy: 0.7279
Epoch 56/100 50000/50000 [==============================] - 260s 5ms/step - loss: 0.9003 - accuracy: 0.7278
Epoch 57/100 50000/50000 [==============================] - 257s 5ms/step - loss: 0.8719 - accuracy: 0.7349
Epoch 58/100 50000/50000 [==============================] - 258s 5ms/step - loss: 0.8678 - accuracy: 0.7369
Epoch 59/100 50000/50000 [==============================] - 262s 5ms/step - loss: 0.8679 - accuracy: 0.7346
Epoch 60/100 50000/50000 [==============================] - 256s 5ms/step - loss: 0.8613 - accuracy: 0.7382
Epoch 61/100 50000/50000 [==============================] - 261s 5ms/step - loss: 0.8543 - accuracy: 0.7383
Epoch 62/100 50000/50000 [==============================] - 257s 5ms/step - loss: 0.8394 - accuracy: 0.7440
Epoch 63/100 50000/50000 [==============================] - 259s 5ms/step - loss: 0.8298 - accuracy: 0.7451
Epoch 64/100 50000/50000 [==============================] - 258s 5ms/step - loss: 0.8249 - accuracy: 0.7478
Epoch 65/100 50000/50000 [==============================] - 253s 5ms/step - loss: 0.8289 - accuracy: 0.7480
Epoch 66/100 50000/50000 [==============================] - 238s 5ms/step - loss: 0.8209 - accuracy: 0.7479
Epoch 67/100 50000/50000 [==============================] - 233s 5ms/step - loss: 0.8145 - accuracy: 0.7493
Epoch 68/100 50000/50000 [==============================] - 234s 5ms/step - loss: 0.8098 - accuracy: 0.7515
Epoch 69/100 50000/50000 [==============================] - 232s 5ms/step - loss: 0.7895 - accuracy: 0.7570
Epoch 70/100 50000/50000 [==============================] - 230s 5ms/step - loss: 0.7986 - accuracy: 0.7555
Epoch 71/100 50000/50000 [==============================] - 233s 5ms/step - loss: 0.7833 - accuracy: 0.7578
Epoch 72/100 50000/50000 [==============================] - 232s 5ms/step - loss: 0.7896 - accuracy: 0.7573
Epoch 73/100 50000/50000 [==============================] - 230s 5ms/step - loss: 0.7820 - accuracy: 0.7579
Epoch 74/100 50000/50000 [==============================] - 234s 5ms/step - loss: 0.7679 - accuracy: 0.7630
Epoch 75/100 50000/50000 [==============================] - 228s 5ms/step - loss: 0.7712 - accuracy: 0.7616
Epoch 76/100 50000/50000 [==============================] - 228s 5ms/step - loss: 0.7625 - accuracy: 0.7640
Epoch 77/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.7537 - accuracy: 0.7674
Epoch 78/100 50000/50000 [==============================] - 230s 5ms/step - loss: 0.7542 - accuracy: 0.7667
Epoch 79/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.7507 - accuracy: 0.7678
Epoch 80/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.7522 - accuracy: 0.7693
Epoch 81/100 50000/50000 [==============================] - 231s 5ms/step - loss: 0.7455 - accuracy: 0.7706
Epoch 82/100 50000/50000 [==============================] - 232s 5ms/step - loss: 0.7447 - accuracy: 0.7699
Epoch 83/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.7349 - accuracy: 0.7747
Epoch 84/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.7325 - accuracy: 0.7751
Epoch 85/100 50000/50000 [==============================] - 233s 5ms/step - loss: 0.7292 - accuracy: 0.7754
Epoch 86/100 50000/50000 [==============================] - 231s 5ms/step - loss: 0.7221 - accuracy: 0.7783
Epoch 87/100 50000/50000 [==============================] - 233s 5ms/step - loss: 0.7124 - accuracy: 0.7789
Epoch 88/100 50000/50000 [==============================] - 241s 5ms/step - loss: 0.7331 - accuracy: 0.7752
Epoch 89/100 50000/50000 [==============================] - 234s 5ms/step - loss: 0.7073 - accuracy: 0.7824
Epoch 90/100 50000/50000 [==============================] - 236s 5ms/step - loss: 0.7111 - accuracy: 0.7807
Epoch 91/100 50000/50000 [==============================] - 232s 5ms/step - loss: 0.7146 - accuracy: 0.7803
Epoch 92/100 50000/50000 [==============================] - 231s 5ms/step - loss: 0.7144 - accuracy: 0.7805
Epoch 93/100 50000/50000 [==============================] - 233s 5ms/step - loss: 0.6896 - accuracy: 0.7867
Epoch 94/100 50000/50000 [==============================] - 231s 5ms/step - loss: 0.6911 - accuracy: 0.7871
Epoch 95/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.7040 - accuracy: 0.7825
Epoch 96/100 50000/50000 [==============================] - 228s 5ms/step - loss: 0.6815 - accuracy: 0.7888
Epoch 97/100 50000/50000 [==============================] - 231s 5ms/step - loss: 0.6780 - accuracy: 0.7902
Epoch 98/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.6684 - accuracy: 0.7935
Epoch 99/100 50000/50000 [==============================] - 231s 5ms/step - loss: 0.6759 - accuracy: 0.7902
Epoch 100/100 50000/50000 [==============================] - 229s 5ms/step - loss: 0.6817 - accuracy: 0.7905
Model: "sequential_1"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
conv2d_1 (Conv2D)            (None, 32, 32, 32)        896
_________________________________________________________________
activation_1 (Activation)    (None, 32, 32, 32)        0
_________________________________________________________________
conv2d_2 (Conv2D)            (None, 32, 32, 32)        9248
_________________________________________________________________
activation_2 (Activation)    (None, 32, 32, 32)        0
_________________________________________________________________
max_pooling2d_1 (MaxPooling2 (None, 16, 16, 32)        0
_________________________________________________________________
dropout_1 (Dropout)          (None, 16, 16, 32)        0
_________________________________________________________________
conv2d_3 (Conv2D)            (None, 16, 16, 64)        18496
_________________________________________________________________
activation_3 (Activation)    (None, 16, 16, 64)        0
_________________________________________________________________
conv2d_4 (Conv2D)            (None, 16, 16, 64)        36928
_________________________________________________________________
activation_4 (Activation)    (None, 16, 16, 64)        0
_________________________________________________________________
max_pooling2d_2 (MaxPooling2 (None, 8, 8, 64)          0
_________________________________________________________________
dropout_2 (Dropout)          (None, 8, 8, 64)          0
_________________________________________________________________
flatten_1 (Flatten)          (None, 4096)              0
_________________________________________________________________
dense_1 (Dense)              (None, 512)               2097664
_________________________________________________________________
activation_5 (Activation)    (None, 512)               0
_________________________________________________________________
dropout_3 (Dropout)          (None, 512)               0
_________________________________________________________________
dense_2 (Dense)              (None, 100)               51300
=================================================================
Total params: 2,214,532
Trainable params: 2,214,532
Non-trainable params: 0
_________________________________________________________________
Test loss: 2.4145295867919923
Test accuracy: 0.47380000352859497
Test loss: 2.4145295867919923, test accuracy: 47.3800003528595%

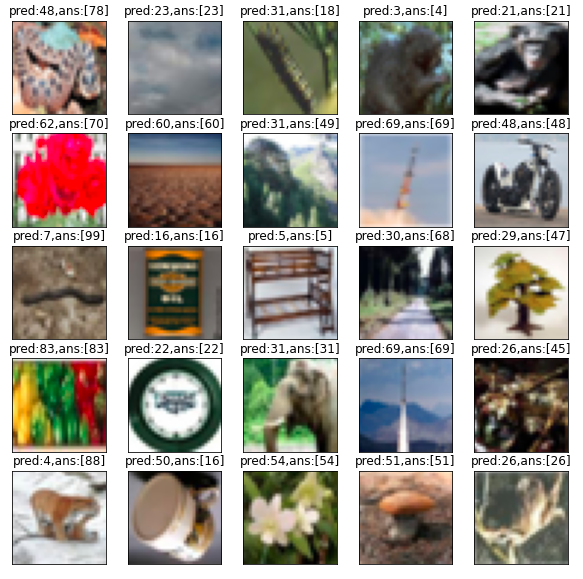
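The parameter counts in the model summary can be checked by hand. A Conv2D layer with a k x k kernel has (k * k * input channels + 1) * filters parameters, and a Dense layer has (inputs + 1) * units, where the +1 is the bias. The helper names below are made up for this sketch; the layer sizes come from the model definition above.

```python
def conv2d_params(in_channels, filters, kernel=3):
    # kernel*kernel*in_channels weights plus one bias, for each filter
    return (kernel * kernel * in_channels + 1) * filters

def dense_params(inputs, units):
    # one weight per input plus one bias, for each unit
    return (inputs + 1) * units

layer_params = [
    conv2d_params(3, 32),            # first Conv2D on the 3-channel input
    conv2d_params(32, 32),           # second Conv2D
    conv2d_params(32, 64),           # third Conv2D
    conv2d_params(64, 64),           # fourth Conv2D
    dense_params(8 * 8 * 64, 512),   # Dense(512) on the flattened 8x8x64 feature map
    dense_params(512, 100),          # Dense(100) softmax output
]

print(layer_params)
print("total:", sum(layer_params))
```

The Dense(512) layer right after Flatten accounts for almost all of the parameters, which is one reason a model like this can memorize the training set easily.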

Training accuracy reached about 79%, while test accuracy is only about 47%, so the model is clearly overfitting. To improve test accuracy, it might help to add more training images with ImageDataGenerator or a similar augmentation tool. Training is already slow, though: as the log above shows, even with a GPU, 100 epochs took almost 7 hours. Next, I will use the test images to check whether the model's predictions match the correct classes.
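As a rough illustration of the kind of augmentation that ImageDataGenerator performs, here is a minimal NumPy sketch of two common transforms: a random horizontal flip and a random shift with zero padding. It is not ImageDataGenerator itself; `augment_batch` and its parameters are hypothetical names for this example, and the input batch is random data standing in for normalized CIFAR images.

```python
import numpy as np

def augment_batch(images, max_shift=4, rng=None):
    """Randomly shift and horizontally flip a batch of images shaped (N, H, W, C)."""
    rng = np.random.default_rng() if rng is None else rng
    n, h, w, _ = images.shape
    # Zero-pad height and width so that a shifted crop stays inside the array
    padded = np.pad(images, ((0, 0), (max_shift, max_shift), (max_shift, max_shift), (0, 0)))
    out = np.empty_like(images)
    for i in range(n):
        top = rng.integers(0, 2 * max_shift + 1)
        left = rng.integers(0, 2 * max_shift + 1)
        crop = padded[i, top:top + h, left:left + w]
        if rng.random() < 0.5:
            crop = crop[:, ::-1]  # flip left-right
        out[i] = crop
    return out

# Random stand-in for a batch of normalized 32x32 RGB images
batch = np.random.default_rng(0).random((8, 32, 32, 3)).astype("float32")
augmented = augment_batch(batch, rng=np.random.default_rng(1))
print(augmented.shape, augmented.dtype)
```

Applying a function like this to each training batch gives the network slightly different images every epoch, which usually helps against overfitting, at the cost of even longer training.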

# Predict the class of all 10000 test images (argmax over the softmax output; predict_classes() is not available in newer Keras versions)

img_pred = np.argmax(model.predict(x_test), axis=1)

# y_test is one-hot encoded, so recover the integer labels

y_test_label = np.argmax(y_test, axis=1)

plt.figure(figsize=(10,10))

for i in range(25):

    rand_num=np.random.randint(0,10000)

    cifar_img=plt.subplot(5,5,i+1)

    plt.imshow(x_test[rand_num])

    # Hide the tick labels

    plt.xticks(color="None")

    plt.yticks(color="None")

    # Hide the tick marks on the x-axis and y-axis

    plt.tick_params(length=0)

    # pred: is the class predicted by the model, ans: is the correct class

    plt.title('pred:{0},ans:{1}'.format(img_pred[rand_num],y_test_label[rand_num]))

plt.show()

11 out of 25 predictions are wrong, so 14 out of 25 (56%) are correct. Test accuracy was just under 50%, so this sample came out slightly better. Finally, I searched for some photos on the internet and used them for prediction. I uploaded them to Google Drive and read them as shown below.
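Rather than counting by eye, the number of correct predictions in a sample can be computed by comparing predicted and true labels. This is a minimal sketch with made-up label arrays; in the notebook they would be the predicted class indices and the integer test labels for the 25 sampled images. `sample_accuracy` is a hypothetical helper.

```python
import numpy as np

def sample_accuracy(pred, true):
    """Return (number correct, fraction correct) for two label arrays."""
    pred = np.asarray(pred).reshape(-1)
    true = np.asarray(true).reshape(-1)
    correct = int(np.sum(pred == true))
    return correct, correct / len(true)

# Made-up example: 25 labels, with the first 11 predictions deliberately wrong
true = np.arange(25)
pred = true.copy()
pred[:11] = (pred[:11] + 1) % 100

correct, acc = sample_accuracy(pred, true)
print("{0} of {1} correct ({2:.0%})".format(correct, len(true), acc))
```

With only 25 images the result varies a lot from one random sample to the next, so the accuracy on the full test set is the more reliable number.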

# Read each image downloaded from the internet, resize it to the model's input shape, and predict its class with the trained model

from keras.models import model_from_json

import matplotlib.pyplot as plt

from keras.preprocessing.image import img_to_array, load_img

import glob

files = glob.glob("/content/drive/My Drive/cifar100_sample/*")

for i in files:

    # load_img() loads a JPEG or PNG image and resizes it to target_size

    temp_img=load_img(i, target_size=(32,32))

    # Convert the image to an array and normalize it to the range 0-1

    temp_img_array=img_to_array(temp_img)

    temp_img_array=temp_img_array.astype('float32')/255.0

    temp_img_array=temp_img_array.reshape((1,32,32,3))

    # Predict the class (argmax over the softmax output)

    img_pred=np.argmax(model.predict(temp_img_array), axis=1)

    print('\npredict_classes =',img_pred)

    plt.imshow(temp_img)

    plt.title(i.replace('/content/drive/My Drive/cifar100_sample/', '').replace('.jpg', ''))

    plt.show()

Not correct: the crocodile photo is recognized as motorcycle(48).

Not correct: the boy photo is recognized as palm tree(56). It is funny: this is a photo of a boy, but his hair looks like a tree, which is probably why it was recognized as a palm tree.

The airplane photo is recognized as whale.

The banana photo is recognized as clock.

Not correct: the wardrobe photo is recognized as leopard(42).

Not correct: the aquarium fish photo is recognized as tulips(92).

4 out of 11 are wrong, so I think this is a reasonably good result. Note that banana and airplane are not among the classes of this dataset. I included them just for fun, to see which class they would be assigned to. The accuracy on this dataset still needs to be improved. Thanks.
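Because the last layer is a 100-way softmax, the model always assigns one of the 100 classes, even to a photo such as a banana or an airplane that is not in the dataset. One way to make this visible is to look at the top few probabilities instead of only the winning class. The sketch below uses a made-up probability vector in place of the model output for one image; `top_k` is a hypothetical helper, and the numbers are not results from the trained model.

```python
import numpy as np

def top_k(probs, k=3):
    """Return the k most probable (class index, probability) pairs."""
    probs = np.asarray(probs)
    idx = np.argsort(probs)[::-1][:k]
    return [(int(i), float(probs[i])) for i in idx]

# Made-up, nearly flat softmax output: class 95 wins, but only barely
logits = np.zeros(100)
logits[95] = 1.0
logits[22] = 0.5
probs = np.exp(logits) / np.exp(logits).sum()

for idx, p in top_k(probs):
    print("class {0}: {1:.3f}".format(idx, p))
```

When the highest probability is this low, the prediction says little more than "none of the classes fits well", which is what one would hope to see for images from outside the dataset.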